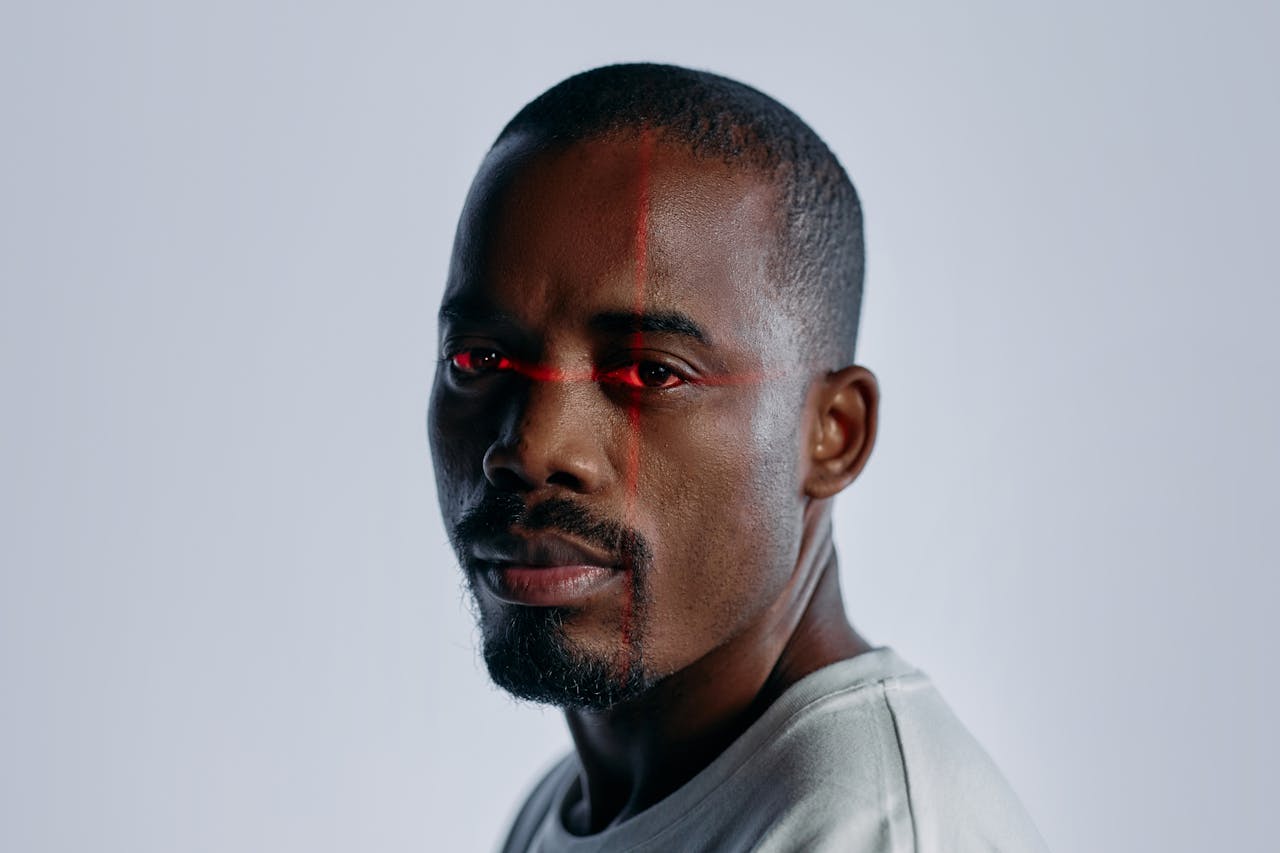

Artificial intelligence has made it easier than ever to create highly realistic digital impersonations, known as deepfakes, that can mimic someone’s appearance, speech, and even mannerisms. In response, Denmark is preparing a landmark legal reform: giving people copyright-style control over their own body, face, and voice. If passed, the law would empower individuals to demand removal of unauthorized AI-generated likenesses and seek compensation when their identity is misused.

This would be the first such law in Europe, and it could reshape how societies respond to the challenges of generative AI.

Why Deepfakes Pose a Growing Risk

Deepfakes are no longer awkward novelties. They are persuasive simulations that exploit the way people trust realistic audio and video. As Europol notes, “Deepfake technology can produce content that convincingly shows people saying or doing things they never did.” This undermines quick judgments about authenticity, especially when clips appear inside everyday contexts such as messaging threads, short videos, and news snippets. The tools required to fabricate convincing material are widely accessible, quality keeps improving, and the time and skill needed to produce credible results continue to fall.

The most immediate risk arises in social manipulation, where cloned voices or faces are used to prompt fast decisions under pressure. The United States Federal Trade Commission warns that “Scammers use voice cloning to make their requests for money or information more believable.” When a voicemail or call sounds identical to a supervisor or a family member, people are more likely to transfer funds or disclose sensitive information before pausing to verify.

Harm extends well beyond financial loss. Law enforcement reports a growing use of synthetic images and videos to create explicit material without consent and to coerce victims. In the words of the Federal Bureau of Investigation, “The FBI is warning the public of malicious actors creating synthetic content,” and the bureau details cases in which photos of minors and adults are altered into sexual content and then circulated on social platforms and pornography sites, often as part of sextortion schemes that are hard to stop once the files spread.

The broader danger is a loss of confidence in digital records. Europol cautions that photographs and videos have long served as anchors for truth in daily life and in institutional settings, yet synthetic media blurs the boundary between documentation and fabrication and complicates the validation of evidence. Detection and watermarking tools continue to evolve, but creators adapt just as quickly, leaving newsrooms, courts, and ordinary users with a continuous verification burden.

Denmark’s Legal Proposal

The Danish Ministry of Culture has outlined a proposal to expand copyright law so that individuals are granted explicit rights over their likeness, voice, and body. The amendment represents a shift from treating identity only as a privacy concern to recognizing it as something that can be protected under intellectual property frameworks. It specifically defines a deepfake as “a very realistic digital representation of a person, including their appearance and voice.” This precise language is meant to leave little ambiguity for courts or platforms when evaluating whether a synthetic video or audio file falls under the law.

What makes this initiative distinctive is its breadth. The legislation is designed not only to protect private citizens from having their identities cloned without permission but also to shield professional performers and public figures whose voices or bodies could be digitally reproduced. It further stipulates that those affected will be able to demand removal of such content and pursue compensation. At the same time, it preserves long-standing traditions of parody and satire by carving them out explicitly as lawful uses. This balance is intended to reassure artists and commentators that political critique and humor remain protected, even as unauthorized imitations for deception or exploitation are curtailed.

Culture Minister Jakob Engel-Schmidt stressed that the bill is intended to send a direct signal that identity cannot be treated as a raw material for generative technologies. He told The Guardian: “In the bill we agree and are sending an unequivocal message that everybody has the right to their own body, their own voice and their own facial features, which is apparently not how the current law is protecting people against generative AI.” He added: “Human beings can be run through the digital copy machine and be misused for all sorts of purposes and I’m not willing to accept that.” These comments underscore the principle at the heart of the measure: an individual’s likeness is not open for commercial or exploitative use without permission.

The government has also signaled that enforcement will carry weight. Engel-Schmidt has warned that “severe fines” could follow if platforms fail to cooperate, and he has indicated that Denmark may seek support from the European Commission to ensure compliance across the digital marketplace. By framing the issue in terms of both cultural rights and regulatory authority, Denmark is positioning this amendment as a template that other European nations may study closely.

Enforcement and Wider Implications

The effectiveness of Denmark’s proposal will depend not only on its legal language but also on how it is enforced in practice. Courts will need to determine thresholds for what constitutes a “very realistic” imitation and decide whether altered or partially synthetic media fall within the scope of protection. These legal interpretations will be critical for ensuring that the measure functions beyond symbolic value. Platforms will also face new obligations to respond promptly to takedown requests, and their compliance will likely be monitored by national regulators, with potential oversight by the European Commission if disputes escalate.

Cross-border enforcement presents another layer of complexity. Deepfake creators often operate outside of national jurisdictions, and content can be hosted on servers beyond Danish territory. While Denmark can sanction platforms that do business locally, the persistence of manipulated media on foreign sites raises the possibility that international agreements or EU-level directives will be necessary for consistent protection. Engel-Schmidt has already pointed to Denmark’s upcoming EU presidency as an opportunity to promote coordinated action, suggesting that the initiative could serve as a starting point for continent-wide regulation.

The proposal also has implications for broader legal doctrines. Scholars have suggested that if Denmark succeeds, it could accelerate recognition of a new form of digital identity right, reshaping how privacy, consent, and copyright intersect in the twenty-first century. This shift could influence both commercial practices and civil litigation, as individuals test the boundaries of their rights against technology companies and content distributors.

The Health Impact of Deepfakes

Deepfake concerns may appear purely legal or technological at first, yet they can affect wellness and everyday health in both subtle and serious ways. For individuals who find themselves targeted by manipulated media, the stress response can be immediate and overwhelming. Research shows that exposure to harassment and reputational threats increases risks of anxiety, sleep disruption, and depressive symptoms. Victims of non-consensual synthetic images often report physical symptoms associated with chronic stress such as headaches, digestive issues, and muscle tension, which highlights how digital misuse has tangible consequences for the body.

There is also a community health dimension. When societies lose confidence in what they see and hear, trust erodes in institutions, news, and even personal relationships. Constant doubt about authenticity can fuel collective anxiety, which researchers have linked to elevated stress hormone levels across populations. This can weaken resilience against illness and contribute to unhealthy coping behaviors like poor diet, alcohol use, or withdrawal from social engagement. Protecting identity in the digital space is therefore not only a legal safeguard but also a public health measure.

On the preventive side, individuals can strengthen their ability to cope by building supportive social networks, practicing mindfulness, and limiting exposure to distressing manipulated content. Simple steps like taking breaks from news feeds, seeking counseling when distress becomes severe, and engaging in restorative practices such as yoga or nature walks can buffer the physiological effects of digital stress. These strategies connect the legal debate in Denmark to everyday health, reminding us that preserving dignity online contributes directly to well-being offline.

Toward a New Era of Identity Rights

Denmark’s proposal marks a turning point in the battle against deepfakes. By giving people ownership over their image, voice, and body, it addresses one of the most pressing ethical challenges of generative AI: protecting human dignity in an era of perfect digital forgeries.

Whether or not other nations follow suit, the move signals a shift in how we define identity and consent in the digital world. It asks a profound question: if technology can copy you perfectly, shouldn’t the law guarantee that you remain the one in control?